What CEOs Should Know Before Signing an AI Agent Contract

By sundae_bar

AI agent vendors are having their best sales year in history. Enterprise budgets are moving, boards are asking questions, and the pressure to show progress is real. That's exactly when the wrong contract gets signed. Here's what to know before you commit.

The Market Has Moved Faster Than the Contracts Have

Enterprise AI agent spending reached $37 billion in 2025, up from $11.5 billion the year before — a 3.2x increase in a single year. That velocity is impressive. It also means procurement frameworks, contract standards, and vendor accountability norms haven't kept pace with the technology.

Most enterprise buyers are evaluating AI agents with the same procurement lens they'd apply to a SaaS subscription. That's the wrong lens. An AI agent that operates autonomously across your business workflows is a fundamentally different category of commitment — and the questions you ask before signing should reflect that.

Who Actually Owns Your Data?

This is the first question and the most important one. When your AI agent processes your customer records, internal communications, proprietary workflows, and business data — who owns that data, and what can the vendor do with it?

Enterprise data ownership is already a source of commercial and legal conflict. Salesforce raised prices on apps that access its data. Celonis sued SAP over data access terms. Connection fees and data access rights are becoming contested territory as AI agents become more deeply integrated into business operations. Your contract needs explicit terms on data sovereignty before anything else.

Ask directly: Does the vendor use your data to train or improve their models? What happens to your data if you terminate the contract? Who is liable if your proprietary data is exposed? If the answers aren't specific and in writing, treat that as a red flag.

What Does the Pricing Actually Scale To?

AI agent pricing models are in flux, and the gap between what vendors quote at contract signing and what deployment actually costs at scale is significant. Gartner forecasts that enterprise software spend will rise at least 40% by 2027, with generative AI as the primary accelerant. Consumption-based pricing makes the problem worse: cost estimates built during a pilot routinely underestimate production costs by 500–1,000% once the same workloads go live.

The attractive pilot price is not the production price. Understand the pricing model in detail before signing: Is it per seat? Per action? Per API call? Per agent? What happens to costs when usage doubles? When you add departments? When the agent handles high-volume workflows?

CFOs and CIOs pushed back hard on consumption-based models in 2025 precisely because unpredictability made budgeting impossible. Demand a pricing structure you can model at scale before you commit.

What Happens When the Agent Gets It Wrong?

Every AI agent will make mistakes. The question isn't whether errors will occur — it's what happens when they do, and who is responsible.

As AI agents begin executing code, signing contracts, and booking transactions independently, the question of who is liable for autonomous errors remains largely unresolved in both law and vendor contracts. Most enterprise AI contracts place liability firmly with the customer. You should know exactly what your exposure is before the agent takes any action on your behalf.

Ask: What are the escalation protocols when the agent produces an incorrect output? What audit trail exists for agent decisions? What remediation does the vendor offer? And crucially: does the contract disclaim the vendor's liability for the consequences of agent errors, or require you to indemnify the vendor? Read this section carefully.

Is This a Pilot Dressed Up as a Product?

Only 14% of enterprises have production-ready AI implementations, even though 62% are actively experimenting. A significant share of what's being sold as "enterprise AI agents" in 2026 is still fundamentally demo-grade technology positioned as production-ready.

Specific things to probe: Has the agent been deployed in a production environment at a company of comparable size and complexity to yours? Not a pilot. Not a proof of concept. An actual live deployment that's been running for at least six months, handling real volume, with measurable outcomes you can verify. Ask for references and speak to them directly.

Snowflake's CEO has predicted that in 2026, enterprises will insist on systematic methods to measure AI reliability before deploying at scale, because business-critical applications require exact answers, not probabilistic ones. Your contract should specify how the agent's performance is measured, what the benchmarks are, and what recourse you have if it doesn't meet them.

What Does the Exit Look Like?

Lock-in risk is real and often underestimated at the point of signing. Once an AI agent is integrated into your workflows, connected to your systems, and trained on your data, switching becomes expensive and disruptive — which is exactly the leverage position vendors are building toward.

Ask before you're in: What does migration off the platform look like? What format is your data exportable in? Are there termination fees? How long does transition take? What access do you retain to agent configurations and training data you've contributed?

A vendor with nothing to hide will answer these questions straightforwardly. One that deflects or buries the answers in contract language is telling you something important.

The Right Contract Starts With the Right Vendor

PwC research found that AI success in 2026 requires a top-down enterprise strategy — not crowdsourced pilots that leadership tries to shape retroactively into something coherent. The CEO's job is to make sure the vendor relationship reflects where the business is actually going, not just where the most recent internal champion pushed the evaluation.

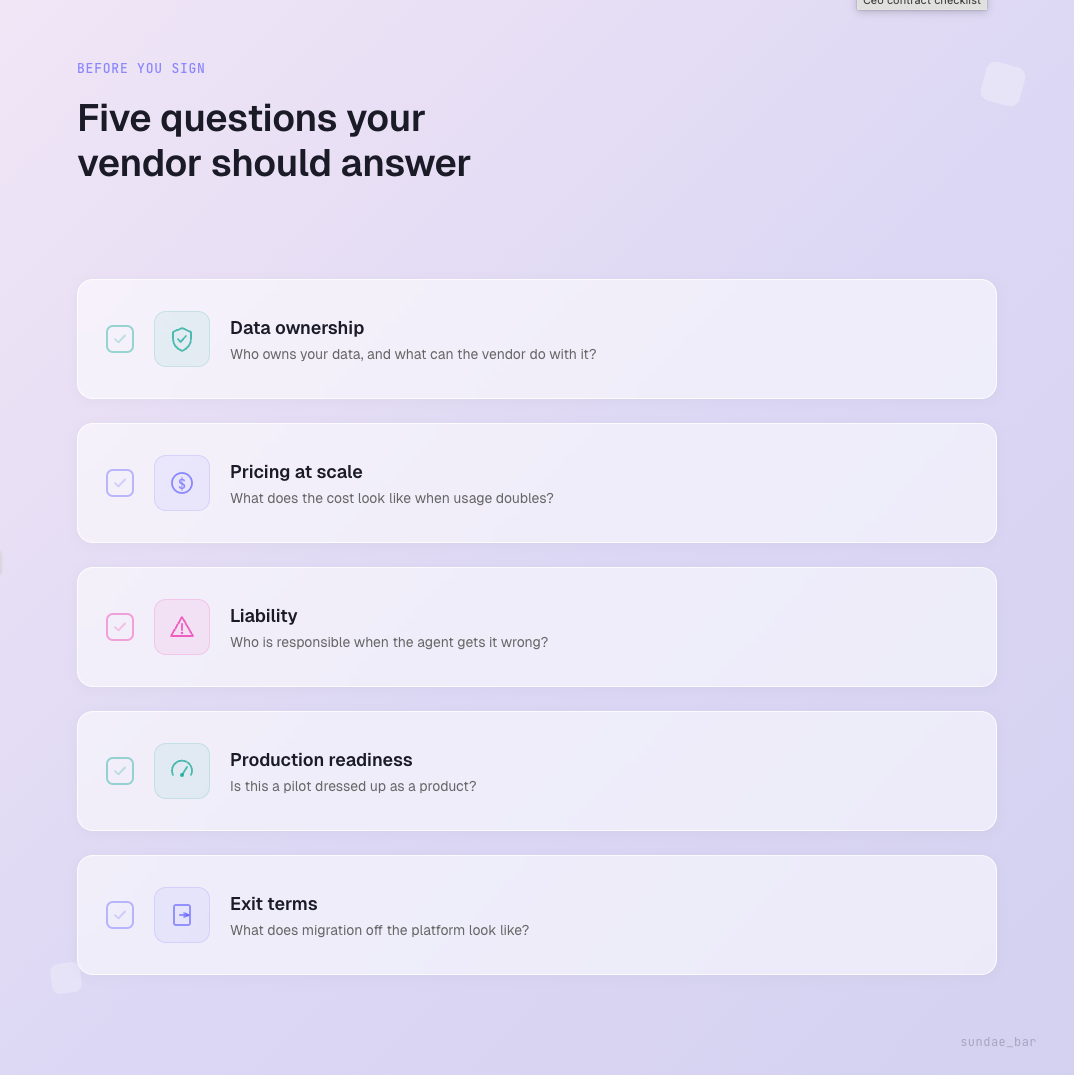

That means asking the hard questions before signing: on data, pricing, liability, production readiness, and exit terms. Vendors who are genuinely ready for enterprise deployment will have clear answers. Those who aren't will show it very quickly.

At sundae_bar, our generalist agent is built for production environments from day one: an open-source framework, structured evaluation through SN121, clear data boundaries, and deployment support that doesn't disappear after the contract is signed.

Talk to the team at sundaebar.ai/enterprise before you sign anything else.